Coaching a convnet with a small dataset

Having to coach an image-classification mannequin utilizing little or no knowledge is a standard state of affairs, which you’ll seemingly encounter in follow if you happen to ever do pc imaginative and prescient in an expert context. A “few” samples can imply wherever from just a few hundred to some tens of hundreds of photos. As a sensible instance, we’ll deal with classifying photos as canine or cats, in a dataset containing 4,000 footage of cats and canine (2,000 cats, 2,000 canine). We’ll use 2,000 footage for coaching – 1,000 for validation, and 1,000 for testing.

In Chapter 5 of the Deep Studying with R e book we overview three methods for tackling this drawback. The primary of those is coaching a small mannequin from scratch on what little knowledge you’ve gotten (which achieves an accuracy of 82%). Subsequently we use characteristic extraction with a pretrained community (leading to an accuracy of 90%) and fine-tuning a pretrained community (with a closing accuracy of 97%). On this submit we’ll cowl solely the second and third methods.

The relevance of deep studying for small-data issues

You’ll generally hear that deep studying solely works when a number of knowledge is offered. That is legitimate partially: one elementary attribute of deep studying is that it might probably discover attention-grabbing options within the coaching knowledge by itself, with none want for handbook characteristic engineering, and this may solely be achieved when a number of coaching examples can be found. That is very true for issues the place the enter samples are very high-dimensional, like photos.

However what constitutes a number of samples is relative – relative to the dimensions and depth of the community you’re making an attempt to coach, for starters. It isn’t doable to coach a convnet to resolve a fancy drawback with just some tens of samples, however just a few hundred can probably suffice if the mannequin is small and effectively regularized and the duty is easy. As a result of convnets study native, translation-invariant options, they’re extremely knowledge environment friendly on perceptual issues. Coaching a convnet from scratch on a really small picture dataset will nonetheless yield cheap outcomes regardless of a relative lack of knowledge, with out the necessity for any customized characteristic engineering. You’ll see this in motion on this part.

What’s extra, deep-learning fashions are by nature extremely repurposable: you’ll be able to take, say, an image-classification or speech-to-text mannequin educated on a large-scale dataset and reuse it on a considerably completely different drawback with solely minor adjustments. Particularly, within the case of pc imaginative and prescient, many pretrained fashions (normally educated on the ImageNet dataset) at the moment are publicly obtainable for obtain and can be utilized to bootstrap highly effective imaginative and prescient fashions out of little or no knowledge. That’s what you’ll do within the subsequent part. Let’s begin by getting your palms on the info.

Downloading the info

The Canine vs. Cats dataset that you simply’ll use isn’t packaged with Keras. It was made obtainable by Kaggle as a part of a computer-vision competitors in late 2013, again when convnets weren’t mainstream. You may obtain the unique dataset from https://www.kaggle.com/c/dogs-vs-cats/knowledge (you’ll have to create a Kaggle account if you happen to don’t have already got one – don’t fear, the method is painless).

The images are medium-resolution colour JPEGs. Listed here are some examples:

Unsurprisingly, the dogs-versus-cats Kaggle competitors in 2013 was received by entrants who used convnets. The perfect entries achieved as much as 95% accuracy. Beneath you’ll find yourself with a 97% accuracy, though you’ll prepare your fashions on lower than 10% of the info that was obtainable to the opponents.

This dataset comprises 25,000 photos of canine and cats (12,500 from every class) and is 543 MB (compressed). After downloading and uncompressing it, you’ll create a brand new dataset containing three subsets: a coaching set with 1,000 samples of every class, a validation set with 500 samples of every class, and a check set with 500 samples of every class.

Following is the code to do that:

original_dataset_dir <- "~/Downloads/kaggle_original_data"

base_dir <- "~/Downloads/cats_and_dogs_small"

dir.create(base_dir)

train_dir <- file.path(base_dir, "prepare")

dir.create(train_dir)

validation_dir <- file.path(base_dir, "validation")

dir.create(validation_dir)

test_dir <- file.path(base_dir, "check")

dir.create(test_dir)

train_cats_dir <- file.path(train_dir, "cats")

dir.create(train_cats_dir)

train_dogs_dir <- file.path(train_dir, "canine")

dir.create(train_dogs_dir)

validation_cats_dir <- file.path(validation_dir, "cats")

dir.create(validation_cats_dir)

validation_dogs_dir <- file.path(validation_dir, "canine")

dir.create(validation_dogs_dir)

test_cats_dir <- file.path(test_dir, "cats")

dir.create(test_cats_dir)

test_dogs_dir <- file.path(test_dir, "canine")

dir.create(test_dogs_dir)

fnames <- paste0("cat.", 1:1000, ".jpg")

file.copy(file.path(original_dataset_dir, fnames),

file.path(train_cats_dir))

fnames <- paste0("cat.", 1001:1500, ".jpg")

file.copy(file.path(original_dataset_dir, fnames),

file.path(validation_cats_dir))

fnames <- paste0("cat.", 1501:2000, ".jpg")

file.copy(file.path(original_dataset_dir, fnames),

file.path(test_cats_dir))

fnames <- paste0("canine.", 1:1000, ".jpg")

file.copy(file.path(original_dataset_dir, fnames),

file.path(train_dogs_dir))

fnames <- paste0("canine.", 1001:1500, ".jpg")

file.copy(file.path(original_dataset_dir, fnames),

file.path(validation_dogs_dir))

fnames <- paste0("canine.", 1501:2000, ".jpg")

file.copy(file.path(original_dataset_dir, fnames),

file.path(test_dogs_dir))Utilizing a pretrained convnet

A standard and extremely efficient strategy to deep studying on small picture datasets is to make use of a pretrained community. A pretrained community is a saved community that was beforehand educated on a big dataset, sometimes on a large-scale image-classification process. If this unique dataset is massive sufficient and basic sufficient, then the spatial hierarchy of options discovered by the pretrained community can successfully act as a generic mannequin of the visible world, and therefore its options can show helpful for a lot of completely different computer-vision issues, though these new issues could contain utterly completely different courses than these of the unique process. For example, you may prepare a community on ImageNet (the place courses are largely animals and on a regular basis objects) after which repurpose this educated community for one thing as distant as figuring out furnishings objects in photos. Such portability of discovered options throughout completely different issues is a key benefit of deep studying in comparison with many older, shallow-learning approaches, and it makes deep studying very efficient for small-data issues.

On this case, let’s think about a big convnet educated on the ImageNet dataset (1.4 million labeled photos and 1,000 completely different courses). ImageNet comprises many animal courses, together with completely different species of cats and canine, and you may thus anticipate to carry out effectively on the dogs-versus-cats classification drawback.

You’ll use the VGG16 structure, developed by Karen Simonyan and Andrew Zisserman in 2014; it’s a easy and broadly used convnet structure for ImageNet. Though it’s an older mannequin, removed from the present state-of-the-art and considerably heavier than many different latest fashions, I selected it as a result of its structure is just like what you’re already accustomed to and is straightforward to grasp with out introducing any new ideas. This can be your first encounter with one among these cutesy mannequin names – VGG, ResNet, Inception, Inception-ResNet, Xception, and so forth; you’ll get used to them, as a result of they are going to come up often if you happen to maintain doing deep studying for pc imaginative and prescient.

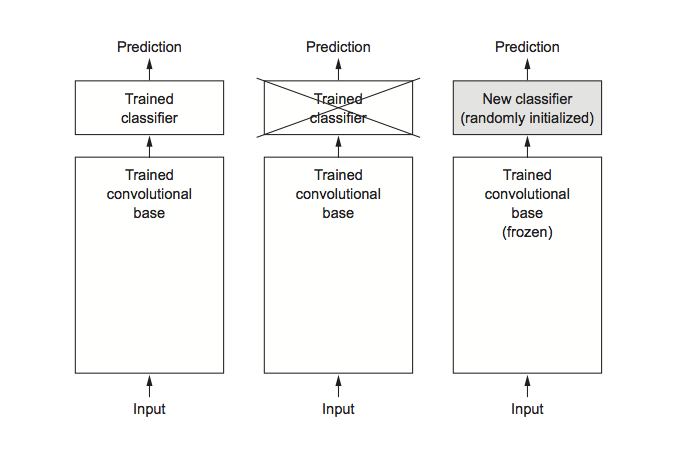

There are two methods to make use of a pretrained community: characteristic extraction and fine-tuning. We’ll cowl each of them. Let’s begin with characteristic extraction.

Function extraction consists of utilizing the representations discovered by a earlier community to extract attention-grabbing options from new samples. These options are then run via a brand new classifier, which is educated from scratch.

As you noticed beforehand, convnets used for picture classification comprise two components: they begin with a collection of pooling and convolution layers, they usually finish with a densely linked classifier. The primary half is known as the convolutional base of the mannequin. Within the case of convnets, characteristic extraction consists of taking the convolutional base of a beforehand educated community, working the brand new knowledge via it, and coaching a brand new classifier on high of the output.

Why solely reuse the convolutional base? Might you reuse the densely linked classifier as effectively? Usually, doing so needs to be averted. The reason being that the representations discovered by the convolutional base are prone to be extra generic and due to this fact extra reusable: the characteristic maps of a convnet are presence maps of generic ideas over an image, which is prone to be helpful whatever the computer-vision drawback at hand. However the representations discovered by the classifier will essentially be particular to the set of courses on which the mannequin was educated – they are going to solely comprise details about the presence chance of this or that class in the whole image. Moreover, representations present in densely linked layers now not comprise any details about the place objects are situated within the enter picture: these layers eliminate the notion of area, whereas the item location continues to be described by convolutional characteristic maps. For issues the place object location issues, densely linked options are largely ineffective.

Word that the extent of generality (and due to this fact reusability) of the representations extracted by particular convolution layers depends upon the depth of the layer within the mannequin. Layers that come earlier within the mannequin extract native, extremely generic characteristic maps (equivalent to visible edges, colours, and textures), whereas layers which can be greater up extract more-abstract ideas (equivalent to “cat ear” or “canine eye”). So in case your new dataset differs quite a bit from the dataset on which the unique mannequin was educated, you could be higher off utilizing solely the primary few layers of the mannequin to do characteristic extraction, relatively than utilizing the whole convolutional base.

On this case, as a result of the ImageNet class set comprises a number of canine and cat courses, it’s prone to be helpful to reuse the knowledge contained within the densely linked layers of the unique mannequin. However we’ll select to not, with a purpose to cowl the extra basic case the place the category set of the brand new drawback doesn’t overlap the category set of the unique mannequin.

Let’s put this in follow by utilizing the convolutional base of the VGG16 community, educated on ImageNet, to extract attention-grabbing options from cat and canine photos, after which prepare a dogs-versus-cats classifier on high of those options.

The VGG16 mannequin, amongst others, comes prepackaged with Keras. Right here’s the listing of image-classification fashions (all pretrained on the ImageNet dataset) which can be obtainable as a part of Keras:

- Xception

- Inception V3

- ResNet50

- VGG16

- VGG19

- MobileNet

Let’s instantiate the VGG16 mannequin.

You move three arguments to the operate:

weightsspecifies the load checkpoint from which to initialize the mannequin.include_toprefers to together with (or not) the densely linked classifier on high of the community. By default, this densely linked classifier corresponds to the 1,000 courses from ImageNet. Since you intend to make use of your personal densely linked classifier (with solely two courses:catandcanine), you don’t want to incorporate it.input_shapeis the form of the picture tensors that you simply’ll feed to the community. This argument is solely non-obligatory: if you happen to don’t move it, the community will be capable to course of inputs of any measurement.

Right here’s the element of the structure of the VGG16 convolutional base. It’s just like the easy convnets you’re already accustomed to:

Layer (kind) Output Form Param #

================================================================

input_1 (InputLayer) (None, 150, 150, 3) 0

________________________________________________________________

block1_conv1 (Convolution2D) (None, 150, 150, 64) 1792

________________________________________________________________

block1_conv2 (Convolution2D) (None, 150, 150, 64) 36928

________________________________________________________________

block1_pool (MaxPooling2D) (None, 75, 75, 64) 0

________________________________________________________________

block2_conv1 (Convolution2D) (None, 75, 75, 128) 73856

________________________________________________________________

block2_conv2 (Convolution2D) (None, 75, 75, 128) 147584

________________________________________________________________

block2_pool (MaxPooling2D) (None, 37, 37, 128) 0

________________________________________________________________

block3_conv1 (Convolution2D) (None, 37, 37, 256) 295168

________________________________________________________________

block3_conv2 (Convolution2D) (None, 37, 37, 256) 590080

________________________________________________________________

block3_conv3 (Convolution2D) (None, 37, 37, 256) 590080

________________________________________________________________

block3_pool (MaxPooling2D) (None, 18, 18, 256) 0

________________________________________________________________

block4_conv1 (Convolution2D) (None, 18, 18, 512) 1180160

________________________________________________________________

block4_conv2 (Convolution2D) (None, 18, 18, 512) 2359808

________________________________________________________________

block4_conv3 (Convolution2D) (None, 18, 18, 512) 2359808

________________________________________________________________

block4_pool (MaxPooling2D) (None, 9, 9, 512) 0

________________________________________________________________

block5_conv1 (Convolution2D) (None, 9, 9, 512) 2359808

________________________________________________________________

block5_conv2 (Convolution2D) (None, 9, 9, 512) 2359808

________________________________________________________________

block5_conv3 (Convolution2D) (None, 9, 9, 512) 2359808

________________________________________________________________

block5_pool (MaxPooling2D) (None, 4, 4, 512) 0

================================================================

Complete params: 14,714,688

Trainable params: 14,714,688

Non-trainable params: 0The ultimate characteristic map has form (4, 4, 512). That’s the characteristic on high of which you’ll stick a densely linked classifier.

At this level, there are two methods you would proceed:

-

Working the convolutional base over your dataset, recording its output to an array on disk, after which utilizing this knowledge as enter to a standalone, densely linked classifier just like these you noticed partially 1 of this e book. This resolution is quick and low cost to run, as a result of it solely requires working the convolutional base as soon as for each enter picture, and the convolutional base is by far the most costly a part of the pipeline. However for a similar purpose, this system received’t will let you use knowledge augmentation.

-

Extending the mannequin you’ve gotten (

conv_base) by including dense layers on high, and working the entire thing finish to finish on the enter knowledge. It will will let you use knowledge augmentation, as a result of each enter picture goes via the convolutional base each time it’s seen by the mannequin. However for a similar purpose, this system is way dearer than the primary.

On this submit we’ll cowl the second approach intimately (within the e book we cowl each). Word that this system is so costly that you must solely try it you probably have entry to a GPU – it’s completely intractable on a CPU.

As a result of fashions behave identical to layers, you’ll be able to add a mannequin (like conv_base) to a sequential mannequin identical to you’d add a layer.

mannequin <- keras_model_sequential() %>%

conv_base %>%

layer_flatten() %>%

layer_dense(models = 256, activation = "relu") %>%

layer_dense(models = 1, activation = "sigmoid")That is what the mannequin appears like now:

Layer (kind) Output Form Param #

================================================================

vgg16 (Mannequin) (None, 4, 4, 512) 14714688

________________________________________________________________

flatten_1 (Flatten) (None, 8192) 0

________________________________________________________________

dense_1 (Dense) (None, 256) 2097408

________________________________________________________________

dense_2 (Dense) (None, 1) 257

================================================================

Complete params: 16,812,353

Trainable params: 16,812,353

Non-trainable params: 0As you’ll be able to see, the convolutional base of VGG16 has 14,714,688 parameters, which may be very massive. The classifier you’re including on high has 2 million parameters.

Earlier than you compile and prepare the mannequin, it’s crucial to freeze the convolutional base. Freezing a layer or set of layers means stopping their weights from being up to date throughout coaching. If you happen to don’t do that, then the representations that have been beforehand discovered by the convolutional base will probably be modified throughout coaching. As a result of the dense layers on high are randomly initialized, very massive weight updates can be propagated via the community, successfully destroying the representations beforehand discovered.

In Keras, you freeze a community utilizing the freeze_weights() operate:

size(mannequin$trainable_weights)[1] 30freeze_weights(conv_base)

size(mannequin$trainable_weights)[1] 4With this setup, solely the weights from the 2 dense layers that you simply added will probably be educated. That’s a complete of 4 weight tensors: two per layer (the principle weight matrix and the bias vector). Word that to ensure that these adjustments to take impact, you will need to first compile the mannequin. If you happen to ever modify weight trainability after compilation, you must then recompile the mannequin, or these adjustments will probably be ignored.

Utilizing knowledge augmentation

Overfitting is brought on by having too few samples to study from, rendering you unable to coach a mannequin that may generalize to new knowledge. Given infinite knowledge, your mannequin can be uncovered to each doable side of the info distribution at hand: you’d by no means overfit. Information augmentation takes the strategy of producing extra coaching knowledge from current coaching samples, by augmenting the samples by way of quite a few random transformations that yield believable-looking photos. The purpose is that at coaching time, your mannequin won’t ever see the very same image twice. This helps expose the mannequin to extra facets of the info and generalize higher.

In Keras, this may be achieved by configuring quite a few random transformations to be carried out on the pictures learn by an image_data_generator(). For instance:

train_datagen = image_data_generator(

rescale = 1/255,

rotation_range = 40,

width_shift_range = 0.2,

height_shift_range = 0.2,

shear_range = 0.2,

zoom_range = 0.2,

horizontal_flip = TRUE,

fill_mode = "nearest"

)These are just some of the choices obtainable (for extra, see the Keras documentation). Let’s rapidly go over this code:

rotation_rangeis a worth in levels (0–180), a spread inside which to randomly rotate footage.width_shiftandheight_shiftare ranges (as a fraction of complete width or peak) inside which to randomly translate footage vertically or horizontally.shear_rangeis for randomly making use of shearing transformations.zoom_rangeis for randomly zooming inside footage.horizontal_flipis for randomly flipping half the pictures horizontally – related when there aren’t any assumptions of horizontal asymmetry (for instance, real-world footage).fill_modeis the technique used for filling in newly created pixels, which may seem after a rotation or a width/peak shift.

Now we will prepare our mannequin utilizing the picture knowledge generator:

# Word that the validation knowledge should not be augmented!

test_datagen <- image_data_generator(rescale = 1/255)

train_generator <- flow_images_from_directory(

train_dir, # Goal listing

train_datagen, # Information generator

target_size = c(150, 150), # Resizes all photos to 150 × 150

batch_size = 20,

class_mode = "binary" # binary_crossentropy loss for binary labels

)

validation_generator <- flow_images_from_directory(

validation_dir,

test_datagen,

target_size = c(150, 150),

batch_size = 20,

class_mode = "binary"

)

mannequin %>% compile(

loss = "binary_crossentropy",

optimizer = optimizer_rmsprop(lr = 2e-5),

metrics = c("accuracy")

)

historical past <- mannequin %>% fit_generator(

train_generator,

steps_per_epoch = 100,

epochs = 30,

validation_data = validation_generator,

validation_steps = 50

)Let’s plot the outcomes. As you’ll be able to see, you attain a validation accuracy of about 90%.

Fantastic-tuning

One other broadly used approach for mannequin reuse, complementary to characteristic extraction, is fine-tuning

Fantastic-tuning consists of unfreezing just a few of the highest layers of a frozen mannequin base used for characteristic extraction, and collectively coaching each the newly added a part of the mannequin (on this case, the absolutely linked classifier) and these high layers. That is referred to as fine-tuning as a result of it barely adjusts the extra summary

representations of the mannequin being reused, with a purpose to make them extra related for the issue at hand.

I acknowledged earlier that it’s essential to freeze the convolution base of VGG16 so as to have the ability to prepare a randomly initialized classifier on high. For a similar purpose, it’s solely doable to fine-tune the highest layers of the convolutional base as soon as the classifier on high has already been educated. If the classifier isn’t already educated, then the error sign propagating via the community throughout coaching will probably be too massive, and the representations beforehand discovered by the layers being fine-tuned will probably be destroyed. Thus the steps for fine-tuning a community are as follows:

- Add your customized community on high of an already-trained base community.

- Freeze the bottom community.

- Prepare the half you added.

- Unfreeze some layers within the base community.

- Collectively prepare each these layers and the half you added.

You already accomplished the primary three steps when doing characteristic extraction. Let’s proceed with step 4: you’ll unfreeze your conv_base after which freeze particular person layers inside it.

As a reminder, that is what your convolutional base appears like:

Layer (kind) Output Form Param #

================================================================

input_1 (InputLayer) (None, 150, 150, 3) 0

________________________________________________________________

block1_conv1 (Convolution2D) (None, 150, 150, 64) 1792

________________________________________________________________

block1_conv2 (Convolution2D) (None, 150, 150, 64) 36928

________________________________________________________________

block1_pool (MaxPooling2D) (None, 75, 75, 64) 0

________________________________________________________________

block2_conv1 (Convolution2D) (None, 75, 75, 128) 73856

________________________________________________________________

block2_conv2 (Convolution2D) (None, 75, 75, 128) 147584

________________________________________________________________

block2_pool (MaxPooling2D) (None, 37, 37, 128) 0

________________________________________________________________

block3_conv1 (Convolution2D) (None, 37, 37, 256) 295168

________________________________________________________________

block3_conv2 (Convolution2D) (None, 37, 37, 256) 590080

________________________________________________________________

block3_conv3 (Convolution2D) (None, 37, 37, 256) 590080

________________________________________________________________

block3_pool (MaxPooling2D) (None, 18, 18, 256) 0

________________________________________________________________

block4_conv1 (Convolution2D) (None, 18, 18, 512) 1180160

________________________________________________________________

block4_conv2 (Convolution2D) (None, 18, 18, 512) 2359808

________________________________________________________________

block4_conv3 (Convolution2D) (None, 18, 18, 512) 2359808

________________________________________________________________

block4_pool (MaxPooling2D) (None, 9, 9, 512) 0

________________________________________________________________

block5_conv1 (Convolution2D) (None, 9, 9, 512) 2359808

________________________________________________________________

block5_conv2 (Convolution2D) (None, 9, 9, 512) 2359808

________________________________________________________________

block5_conv3 (Convolution2D) (None, 9, 9, 512) 2359808

________________________________________________________________

block5_pool (MaxPooling2D) (None, 4, 4, 512) 0

================================================================

Complete params: 14714688You’ll fine-tune the entire layers from block3_conv1 and on. Why not fine-tune the whole convolutional base? You could possibly. However you must think about the next:

- Earlier layers within the convolutional base encode more-generic, reusable options, whereas layers greater up encode more-specialized options. It’s extra helpful to fine-tune the extra specialised options, as a result of these are those that must be repurposed in your new drawback. There can be fast-decreasing returns in fine-tuning decrease layers.

- The extra parameters you’re coaching, the extra you’re prone to overfitting. The convolutional base has 15 million parameters, so it will be dangerous to try to coach it in your small dataset.

Thus, on this state of affairs, it’s a great technique to fine-tune solely among the layers within the convolutional base. Let’s set this up, ranging from the place you left off within the earlier instance.

unfreeze_weights(conv_base, from = "block3_conv1")Now you’ll be able to start fine-tuning the community. You’ll do that with the RMSProp optimizer, utilizing a really low studying charge. The explanation for utilizing a low studying charge is that you simply wish to restrict the magnitude of the modifications you make to the representations of the three layers you’re fine-tuning. Updates which can be too massive could hurt these representations.

mannequin %>% compile(

loss = "binary_crossentropy",

optimizer = optimizer_rmsprop(lr = 1e-5),

metrics = c("accuracy")

)

historical past <- mannequin %>% fit_generator(

train_generator,

steps_per_epoch = 100,

epochs = 100,

validation_data = validation_generator,

validation_steps = 50

)Let’s plot our outcomes:

You’re seeing a pleasant 6% absolute enchancment in accuracy, from about 90% to above 96%.

Word that the loss curve doesn’t present any actual enchancment (in actual fact, it’s deteriorating). It’s possible you’ll marvel, how may accuracy keep secure or enhance if the loss isn’t lowering? The reply is easy: what you show is a median of pointwise loss values; however what issues for accuracy is the distribution of the loss values, not their common, as a result of accuracy is the results of a binary thresholding of the category chance predicted by the mannequin. The mannequin should be bettering even when this isn’t mirrored within the common loss.

Now you can lastly consider this mannequin on the check knowledge:

test_generator <- flow_images_from_directory(

test_dir,

test_datagen,

target_size = c(150, 150),

batch_size = 20,

class_mode = "binary"

)mannequin %>% evaluate_generator(test_generator, steps = 50)$loss

[1] 0.2158171

$acc

[1] 0.965Right here you get a check accuracy of 96.5%. Within the unique Kaggle competitors round this dataset, this may have been one of many high outcomes. However utilizing trendy deep-learning methods, you managed to achieve this outcome utilizing solely a small fraction of the coaching knowledge obtainable (about 10%). There’s a big distinction between having the ability to prepare on 20,000 samples in comparison with 2,000 samples!

Take-aways: utilizing convnets with small datasets

Right here’s what you must take away from the workouts prior to now two sections:

- Convnets are the most effective kind of machine-learning fashions for computer-vision duties. It’s doable to coach one from scratch even on a really small dataset, with respectable outcomes.

- On a small dataset, overfitting would be the important difficulty. Information augmentation is a strong solution to struggle overfitting once you’re working with picture knowledge.

- It’s simple to reuse an current convnet on a brand new dataset by way of characteristic extraction. This can be a priceless approach for working with small picture datasets.

- As a complement to characteristic extraction, you should use fine-tuning, which adapts to a brand new drawback among the representations beforehand discovered by an current mannequin. This pushes efficiency a bit additional.

Now you’ve gotten a stable set of instruments for coping with image-classification issues – particularly with small datasets.